Artificial intelligence is a touchy subject. Some fear AI’s potential to take jobs away from workers, while others embrace the convenience it could bring to their everyday lives. Tense conversations about AI span from the most niche tech message boards to the halls of Congress, but if used and regulated correctly, computer scientist Mark Finlayson sees AI being a valuable tool in the future. Finlayson is an Associate Professor at Florida International University who specializes in AI and cognitive science, and one of his main research interests is getting AI to better understand narratives. Storytelling is one of humanity’s oldest forms of communication, and Finlayson posits that discovering how to give AI a better understanding of our stories will grant it a stronger grasp of language and take the technology a step closer to true artificial intelligence.

Crafting a narrative is a creative process, but Finlayson says AI has yet to produce any creativity on its own. He sees AI as an asset to creatives, but for now, they still need to slot their own ideas in to get anything worthwhile out.

Transcript of our edited discussion below:

Hernandez: ChatGPT and Stable Diffusion seem to be the usual suspects for discussions on AI. These programs can write essays and generate pictures in seconds, but they have also been shown to take from other sources from all over the internet. Will their lack of originality keep AI away from creative jobs, or could AI still take over?

Finlayson: That’s a multi-layered question you posed. You say these AI systems lack originality, but I think it’s not quite clear that they lack originality, primarily because we don’t necessarily have a very good definition of what it means to be original. At some level of description, these machines are doing exactly the same thing that people do, which is look at examples, learn patterns and then reproduce examples.

F: Now, in many cases, especially image generation, there’s a number of places where you can see that they’ve just cribbed large portions of an image from somewhere else, right? So there does seem to be a much more obvious sort of what we would call, at least in an academic context, plagiarism. I think people will continue making these systems better, and it’ll become harder and harder to detect the kinds of copying.

F: At what level is something copied? If I’m copying individual pixels from an image, does that make it copying? I mean, everything is made up of pixels. There’s going to be a big continuum of what counts as original and creative and then what counts as just rote reproduction that I think we, as a society, haven’t yet had to tackle because we haven’t had anything in that regime that could do things with that level of subtlety.

F: With the current generation of technologies we’re seeing, I don’t think we’re gonna see machines really replacing people. I think what we’re gonna see, and we’re already seeing, is people creating using these as tools. It’s going to make creative people more efficient, much more productive, but it’s also going to decrease or restrict the range of certain people. It’s gonna allow some people to come into the creative field who otherwise wouldn’t have been able to work in that because they didn’t have the technical ability to draw. So in some sense, it opens the doors to people to express creative visions, who otherwise wouldn’t have been able to do that, or they would have had to hire a person to do it. And so they monetize their own vision. It’s going to make highly skilled artists even more productive. And we’re still just at the beginning of learning how to integrate these tools into artistic workflows.

H: Right.

F: But what these tools can’t do, though, is they can’t come up with broad artistic vision that an artist would have. The unity of a vision or some sort of overarching style. They don’t come up with reasons to do art in the first place, right?

H: Right.

F: They don’t do art because they like to do it or because they need to do it. They do it because they get set up and prompted to do it. You still need people at the inputs and outputs of those processes. Ultimately, we’re not going to be creating this art for machines either. It’s going to be created for people by people, so people are going to be the ultimate judge of whether or not it’s useful or valuable. There’s plenty of space left for people in that field, and in fact, you can’t remove people from the process at all.

F: Are people going to be replaced? People are going to be replaced to some degree, but that’s also a social and economic decision, right? To what degree are we going to allow people to be replaced as a society? Some of the conversations that we see currently, it’s almost like “oh, well, you know, machines can do everything that people can do. What do we need people for anymore?” Well, if you only value people for their economic output, that makes sense. That’s a question that you can ask. But most people don’t think of themselves as merely a cog in a machine or an economic output machine. There’s a quality of experience to human life. That’s why we’re creating these machines in the first place: to improve human life. So if we end up eliminating people, what’s the point of having these machines? We as a society have to agree to what degree we can allow machines to replace people. Where do we find that overall valuable to society, and where do we find that the benefits outweigh the drawbacks?

H: It’s surprisingly a very economic-centered perspective.

F: Yeah. And the language that they use to describe this is very revealing, right? So they talk about the AI taking jobs, not the people who own the AI taking jobs. It’s always a decision by the owner of a business to replace laborers with a machine, right? They are only allowed to do that if they’re allowed by society. And we have such a default idea that, you know, business owners should be able to do whatever the heck they want, no matter how many people it harms or hurts. That’s the default position in America and it’s almost like we can’t break out of that mode. And so I thought it was really ironic that people like Mark Zuckerberg and —

H: Elon Musk.

F: — And Elon Musk are being interviewed by Congress about what AI is going to do to people, but it’s people like Musk and Zuckerberg and Bezos who are going to be replacing workers with AI.

H: What do you think it is about AI that makes people assume that, yes, they are going to be smarter than us, or already are?

F: *Laughs* That’s a question about why do people think the things that they do.

F: Our culture is permeated with fiction, and science fiction in particular, which has been exploring the positive and negative aspects of artificial intelligence as long as AI has been around. Early episodes of The Twilight Zone in the 1950s included artificially intelligent machines and explorations on what it meant to be human and what it meant to have emotion and whether or not something could be truly alive or truly intelligent if it was built by a person. So these things are not new, and we have plenty of examples from our fantasy life. People have lots of examples to draw on analogically, so there’s plenty of evidence, so to speak, that people could point to and say, yeah, machines can be really, really smart. And I’m not saying that they can’t be or they won’t be here. I’m just saying that people already have that idea. That idea’s been around a long time.

H: Oh, yeah.

F: So it’s not necessarily all that surprising that a lot of people believe that.

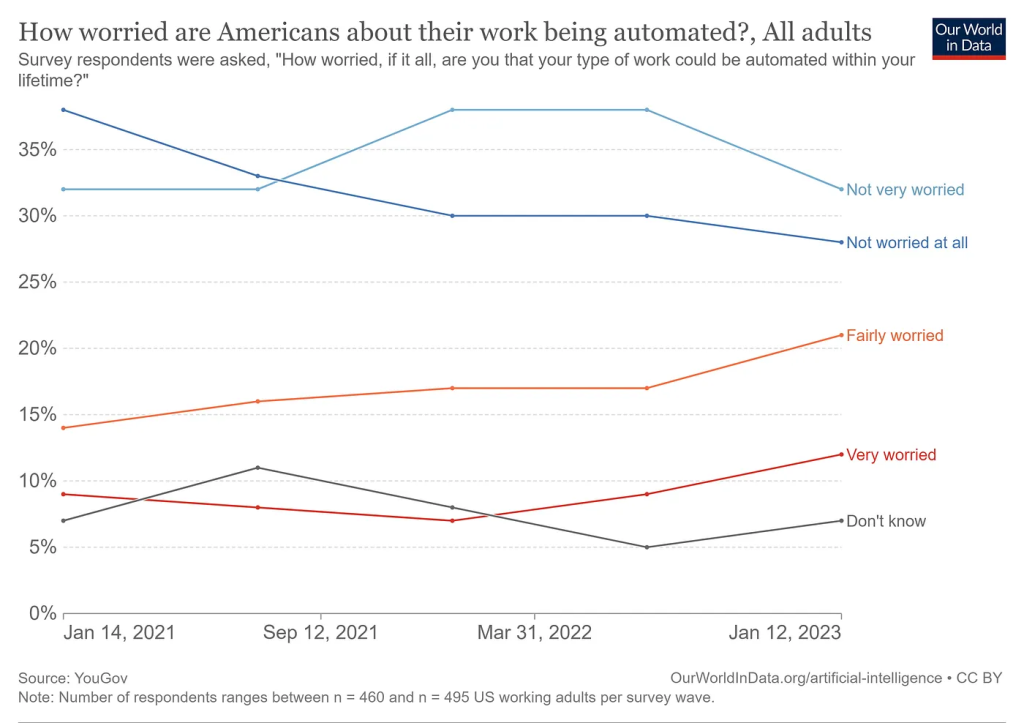

H: A YouGov survey discovered that over 50% of U.S. adults were either not very worried or not worried at all about losing their jobs to AI and automation. What do you think about that?

F: That’s kind of interesting, actually. Because there’s a lot of hype. You’re saying it’s really more like 50% are concerned and 30% aren’t. That actually doesn’t really match up with a lot of the panic and worry that I see communicated in the mainstream media. That’s an interesting observation.

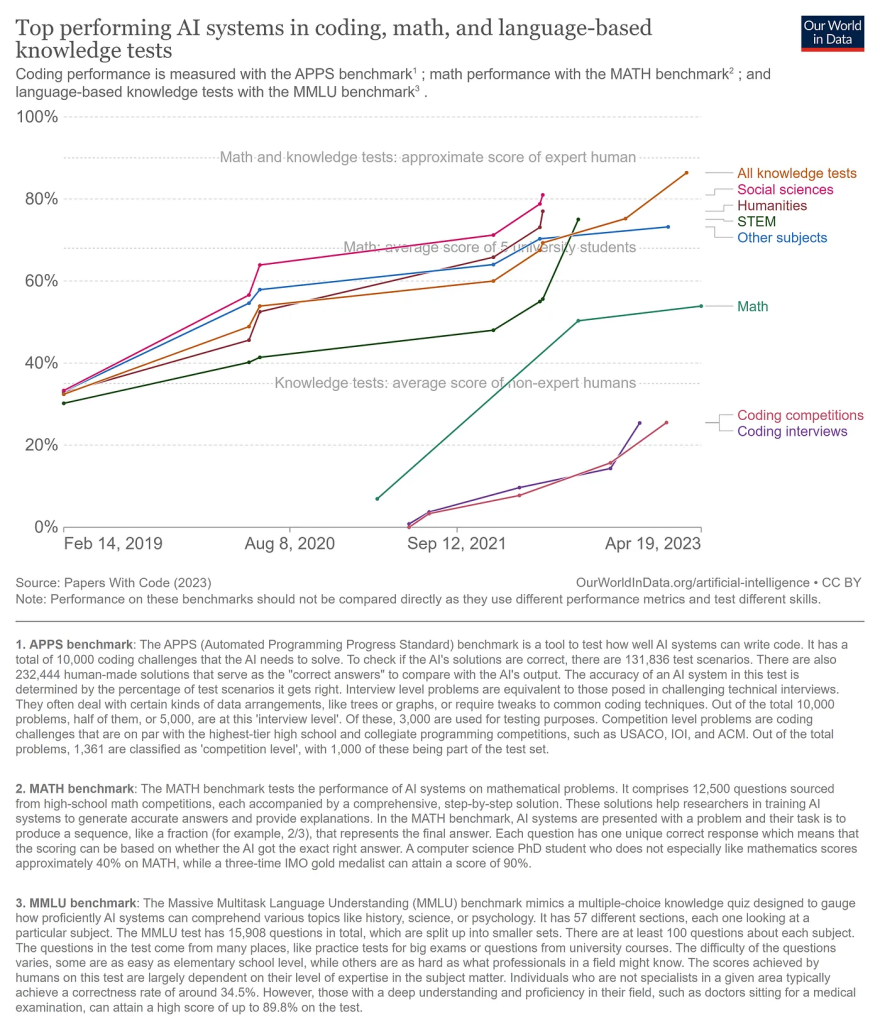

H: So, jumping backwards to what you were talking about before, about how AI does certain jobs well, but other jobs not as well, I found a study from Papers With Code that started in 2013. They ran AI through several math, coding and language-based knowledge tests of different levels of difficulty. And it found that in some of the categories, not all of them, of course, but in categories like coding and math, the AI couldn’t reach the average scores of the college students used as the baseline. What are the factors that are holding AI back from reaching that human expert level of ability?

F: The history of AI is littered with people saying “well, AI can’t do this,” and then someone comes along with a system that can do whatever that is. Evidence that AI can’t do something right now doesn’t give you a lot. As for transformer-based large language models, which is the technology that underlies ChatGPT, some people in the AI community will say what’s missing in that system is some sort of level of metacognition rather than just statistical associations. It doesn’t have a good sense of what’s right or wrong. It doesn’t have a sense of, like, grounded truth out in the world. So these are things that are missing from current systems. But researchers are aware of what the systems are missing. People are working on figuring out how to make those systems do that kind of thing.

F: If we knew the answer to that question, we could do it and we would have solved the problem. So to some degree, the answer is that we don’t really know exactly what they’re missing. And that’s exacerbated by the fact that we don’t truly understand what’s going on inside of these models. They’re so large and so complicated, and it’s very hard to introspect them. I mean, it’s very hard to dive in there and say, “okay, this is why the machine did this,” right? We’re nowhere near the end.

H: Right, right. Yeah.

F: *Laughs*